HOW SEARCH ENGINES WORK: CRAWLING, INDEXING, AND RANKING

First, be visible.

As we mentioned in Chapter 1, search engines are answering machines. They exist to discover, understand, and organize content on the Internet to deliver the most relevant results to the questions searchers ask.

To appear in search results, your content must first be visible to search engines. This is arguably the most important piece of the SEO puzzle: if your site can't be found, there's no way it will show up in the SERPs (search engine results pages).

How do search engines work?

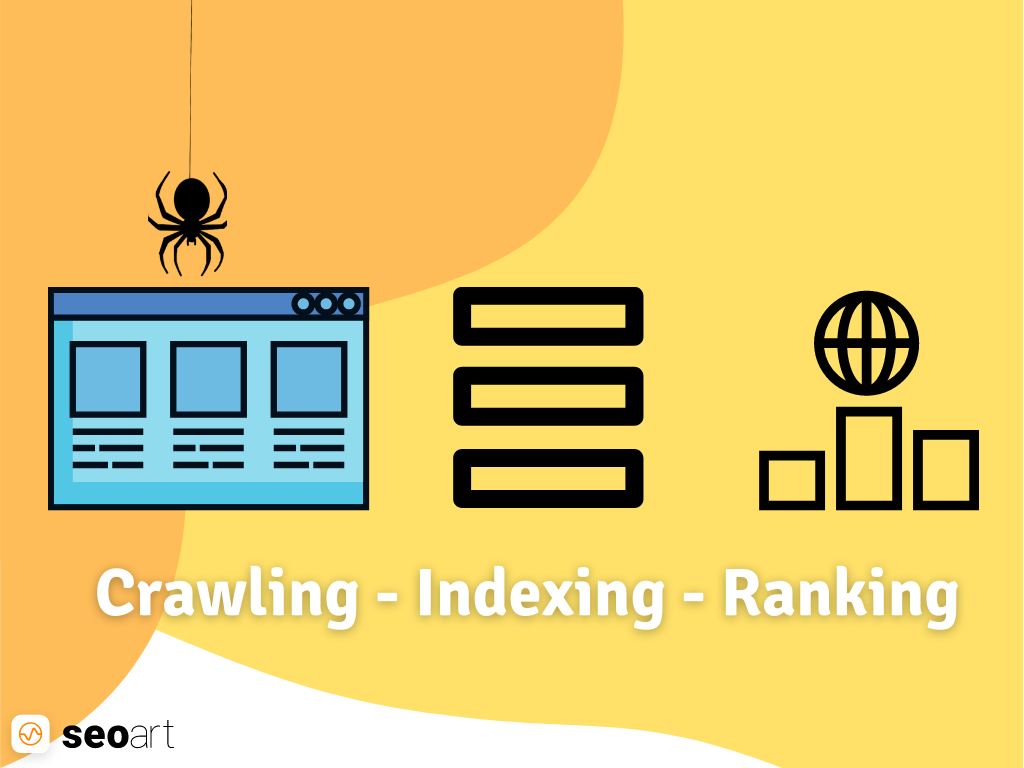

Search engines work through three primary functions:

- Crawling: Scouring the Internet for content, looking over the code/content for each URL they find.

- Indexing: Storing and organizing the content found during the crawling process.

- Ranking: Providing the content that best answers a searcher's query, with results ordered from most relevant to least relevant.

What is search engine crawling?

Crawling is the discovery process in which search engines send out a team of robots (known as crawlers or spiders) to find new and updated content. Content can vary (it could be a web page, an image, a video, a PDF, etc.), but regardless of the format, content is discovered through links.

Googlebot starts by fetching a few web pages, then follows the links on those pages to find new URLs. By hopping along these link paths, the crawler finds new content and adds it to the index called Caffeine, a huge database of discovered URLs.
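The link-following discovery described above can be sketched in a few lines of Python. This is only an illustration, not how Googlebot is implemented: the `LINKS` graph, its URLs, and the `crawl` function are hypothetical, and a real crawler would fetch pages over HTTP instead of reading a dictionary.

```python
from collections import deque

# Hypothetical link graph: each URL maps to the URLs it links to.
LINKS = {
    "https://example.com/": ["https://example.com/a", "https://example.com/b"],
    "https://example.com/a": ["https://example.com/b", "https://example.com/c"],
    "https://example.com/b": [],
    "https://example.com/c": ["https://example.com/"],
    "https://example.com/orphan": [],  # no other page links here
}

def crawl(seeds, links):
    """Breadth-first discovery: start from seed pages and follow links."""
    discovered = []      # stands in for the index, in discovery order
    seen = set(seeds)
    queue = deque(seeds)
    while queue:
        url = queue.popleft()
        discovered.append(url)
        for target in links.get(url, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return discovered

index = crawl(["https://example.com/"], LINKS)
```

Note that the page at `/orphan` never enters `index`: since nothing links to it, a crawler that relies on links alone has no path to it.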

What is a search engine index?

Search engines process and store the information they find in an index, a huge database of all the content they have discovered and consider good enough to serve to searchers.

Search engine ranking

When someone performs a search, the search engine scours its index for highly relevant content and then orders that content in the hope of answering the searcher's query. This ordering of search results by relevance is known as ranking. In general, the higher a web page ranks, the more relevant the search engine believes that page is to the query.

It is possible to block search engine crawlers from part or all of your site, or to instruct search engines not to store certain pages in their index. There can be reasons for doing this, but if you want your content to be found by searchers, you must first make sure it is accessible to crawlers and indexable. Otherwise, it is as good as invisible.

By the end of this section, you will have the necessary knowledge to work with the search engine rather than working against it!

Crawl: Can search engines find your pages?

As you just learned, being crawlable and indexable is a prerequisite for your site to appear in the SERPs. If you already have a website, it might be a good idea to start by checking how many of your pages are in the index. This will show you whether Google is crawling and finding all the pages you want it to, and none that you don't.

One way to check your indexed pages is "site:yourdomain.com", an advanced search operator. Go to Google and type "site:yourdomain.com" into the search box. This returns the results Google has in its index for the specified site.

The number of results Google displays is not exact, but it does give you a solid idea of which pages on your site are indexed and how they currently appear in search results.
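If you check several domains regularly, the "site:" query can be built programmatically. The sketch below only constructs the search URL; `site_query_url` is a hypothetical helper name, and "yourdomain.com" is the same placeholder used above.

```python
from urllib.parse import urlencode

def site_query_url(domain):
    """Build a Google search URL that uses the site: operator."""
    return "https://www.google.com/search?" + urlencode({"q": f"site:{domain}"})

url = site_query_url("yourdomain.com")
```

Opening `url` in a browser shows the same results as typing the operator into the search box by hand.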


For more accurate results, monitor and use the Index Coverage report in Google Search Console. If you don't have an account yet, you can sign up for Google Search Console for free. With this tool, you can submit sitemaps for your site and, among other things, see how many of the submitted pages have actually been added to Google's index.

If you're not showing up anywhere in the search results, there are a few possible reasons:

- Your site is brand new and hasn't been crawled yet

- Your site isn't linked to from any external websites

- Your site's navigation makes it hard for a crawler to crawl it effectively

- Your site contains some basic code called crawler directives that is blocking search engines

- Your site has been penalized by Google for spammy tactics

Tell search engines how to crawl your site

If you used Google Search Console or the "site:domain.com" advanced search operator and found that some of your important pages are missing from the index and/or some of your unimportant pages have been indexed by mistake, there are optimizations you can implement to better direct Googlebot to crawl your content the way you want. Telling search engines how to crawl your site gives you better control over what ends up in the index.

Most people think about making sure Google can find their important pages, but it's easy to forget that there are likely pages you don't want Googlebot to find. These might include old URLs with thin content, duplicate URLs (such as sort-and-filter parameters used in e-commerce), special promo code pages, staging or test pages, and so on.

Use robots.txt to direct Googlebot away from certain pages and sections of your site.

Robots.txt

Robots.txt files are located in the root directory of websites (for example: yourdomain.com/robots.txt). Through specific robots.txt directives, they suggest which parts of your site search engines should and shouldn't crawl.
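To see how a well-behaved crawler reads these directives, here is a minimal sketch using Python's standard `urllib.robotparser` module. The robots.txt content, the `/staging/` and `/promo-codes/` paths, and the `allowed` helper are assumptions made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for yourdomain.com.
ROBOTS_TXT = """\
User-agent: *
Disallow: /staging/
Disallow: /promo-codes/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def allowed(url):
    """Ask whether a crawler identifying as Googlebot may fetch the URL."""
    return parser.can_fetch("Googlebot", url)

products_ok = allowed("https://yourdomain.com/products/")
staging_ok = allowed("https://yourdomain.com/staging/new-home.html")
```

Here `products_ok` is true and `staging_ok` is false. Keep in mind that this only models a crawler that chooses to respect the file; robots.txt is a suggestion, not an access control.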

How Googlebot treats robots.txt files

- If Googlebot can't find a robots.txt file for a site, it proceeds to crawl the site

- If Googlebot finds a robots.txt file for a site, it will usually abide by its suggestions and proceed to crawl the site

- If Googlebot encounters an error while trying to access a site's robots.txt file and can't determine whether one exists, it won't crawl the site

Not all web robots follow robots.txt. People with bad intentions (for example, email address scrapers) build bots that ignore this protocol. Some bad actors even use robots.txt files to find where you keep your private content. Although it seems logical to block crawlers from private pages such as admin and login pages so that they don't appear in the index, listing those URLs in a publicly accessible robots.txt file also means that people with malicious intent can find them more easily. It is safer to noindex these pages and gate them behind a login form than to place them in your robots.txt file.

Defining URL parameters in Google Search Console

Some sites (most commonly in e-commerce) make the same content available on multiple URLs by appending certain parameters to those URLs. If you've ever shopped online, you've probably narrowed down your search results with filters. For example, you may have searched for "shoes" on Amazon and then refined the results by size, color, and style. Each time you refine, the URL changes slightly:

https://www.example.com/products/women/dresses/green.htm

https://www.example.com/products/women?category=dresses&color=green

https://example.com/shopindex.php?product_id=32&highlight=green+dress&cat_id=1&sessionid=123$affid=43

How does Google know which version of the URL to serve to searchers? Google does a pretty good job of figuring out the representative URL on its own, but you can use the URL Parameters feature in Google Search Console to tell Google exactly how you want it to treat your pages. If you use this feature to tell Googlebot to "crawl no URLs with ____ parameter", you are essentially asking to hide that content from Googlebot, which could result in those pages being removed from search results. That is what you want if those parameters create duplicate pages, but it is not ideal if you want those pages to be indexed.
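To get a feel for how parameters create duplicates, the sketch below collapses parameterized URLs into one representative URL by dropping parameters that do not change the content. It is an illustration only, not Google's method: the `IGNORED_PARAMS` set and the `representative_url` function are hypothetical, and which parameters are safe to ignore depends entirely on your site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters assumed not to change what the page shows.
IGNORED_PARAMS = {"sessionid", "affid", "highlight"}

def representative_url(url):
    """Drop ignored parameters and sort the rest to get one stable URL."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query)
        if key not in IGNORED_PARAMS
    )
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))

a = representative_url(
    "https://example.com/shopindex.php?product_id=32&cat_id=1&sessionid=123&affid=43"
)
b = representative_url(
    "https://example.com/shopindex.php?cat_id=1&product_id=32&sessionid=999"
)
```

Both `a` and `b` come out identical, which shows that the two original URLs were serving the same product page under different addresses.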

Can crawlers find all your important content?

Now that you know some tactics for keeping search engine crawlers away from your unimportant content, let's learn about the optimizations that help Googlebot find your important pages.

Sometimes a search engine can find parts of your site by crawling, while other pages or sections are obscured for one reason or another. It's important to make sure that search engines can discover all the content you want indexed, not just your homepage.

Ask yourself this: Can the bot crawl through your website, and not just to it?

Is your content hidden behind login forms?

If you require users to log in, fill out forms, or answer surveys before accessing certain content, search engines won't see those protected pages. A crawler is definitely not going to log in.

Are you relying on search forms?

Robots cannot use search forms. Some people believe that placing a search box on their site lets search engines find everything their visitors search for, but that is not the case.

Is text hidden within non-text content?

Non-text media (images, video, GIFs, etc.) should not be used to display text that you want indexed. Although search engines are getting better at recognizing images, there is no guarantee that they will be able to read and understand them yet. It is always best to add the text within the HTML markup of your web page.

Can search engines follow your site navigation?

Just as a crawler needs links from other sites to discover your site, it needs a path of links on your own site to guide it from page to page. If you have a page you want search engines to find but no other page links to it, it is as good as invisible. Many sites make the critical mistake of structuring their navigation in ways that search engines cannot access, which hurts their ability to appear in search results.

Common navigation errors that prevent crawlers from seeing your entire site

- Having a mobile navigation that shows different results than your desktop navigation

- Any type of navigation where the menu items are not in the HTML, such as JavaScript-enabled navigation. Google has gotten much better at crawling and understanding JavaScript, but it is still not a perfect process. The surest way to get something found, understood, and indexed by Google is to put it in the HTML.

- Personalization, or showing unique navigation to one type of visitor versus others, which could appear to be cloaking to a search engine crawler

- Forgetting to link to a primary page on your website through your navigation. Remember, links are the paths crawlers follow to new pages!

This is why it's important for your website to have clean navigation and helpful URL file structures.

Do you have a clean information architecture?

Information architecture is the practice of organizing and labeling the content on a website to improve efficiency and findability for users. The best information architecture is intuitive, meaning users shouldn't have to think very hard to move through your website or to find something.

Do you use sitemaps?

A sitemap, just as the name suggests, is a list of URLs that crawlers use to discover and index the content on your site. The easiest way to ensure that Google can find your top priority pages is to create a file that conforms to Google's standards and submit it through Google Search Console. While submitting a sitemap doesn't eliminate the need for navigation, it does help crawlers follow the path to your important pages.

Even if no other sites link to yours, you may still be able to get it indexed by submitting your XML sitemap in Google Search Console. There's no guarantee that a submitted URL will be included in the index, but it's worth a try!
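As a rough illustration of what an XML sitemap contains, the sketch below builds a minimal one with Python's standard library. The URLs and the `build_sitemap` helper are hypothetical; the namespace is the one defined by the sitemaps.org protocol. A production sitemap would normally be written to a file with an XML declaration and may carry optional fields that are omitted here.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(urls):
    """Return a minimal XML sitemap listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")

# Hypothetical priority pages for yourdomain.com.
sitemap_xml = build_sitemap([
    "https://yourdomain.com/",
    "https://yourdomain.com/products/",
])
```

Each page you want discovered gets its own `<url>` entry with a `<loc>` child holding the full address.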

Do crawlers encounter errors when they try to access your URLs?

While crawling the URLs on your site, a crawler may encounter errors. You can go to the "Crawl Errors" report in Google Search Console to detect the URLs where this is happening. The report shows you server errors and "not found" errors. Server log files can show you this as well, along with a treasure trove of other information such as crawl frequency. Because accessing and analyzing server log files is a more advanced tactic, we won't cover it in this beginner's guide.

Before you can do anything meaningful with the crawl error report, it's important to understand server errors and "not found" errors.

4xx Codes: When search engine crawlers can't access your content due to a client error

4xx errors are client errors, meaning the requested URL contains bad syntax or cannot be fulfilled. One of the most common 4xx errors is the "404 not found" error. It can occur because of a URL typo, a deleted page, or a broken redirect, to name a few examples. When search engines hit a 404, they can't access the URL. When users hit a 404, they may get frustrated and leave.

5xx Codes: When search engine crawlers can't access your content due to a server error

5xx errors are server errors, meaning the server hosting the web page failed to fulfill the searcher's or search engine's request to access the page. Google Search Console's "Crawl Error" report has a tab dedicated to these errors. They typically happen because the request for the URL timed out, so Googlebot abandoned the request. See Google's documentation to learn more about fixing server connectivity issues.
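The split between client and server errors follows directly from the first digit of the status code. As a small sketch, the function below sorts a hypothetical crawl log into the two error groups; the paths, the codes in `crawl_log`, and the `classify_status` helper are made up for illustration.

```python
def classify_status(code):
    """Group an HTTP status code by its class."""
    if 200 <= code < 300:
        return "success"
    if 300 <= code < 400:
        return "redirect"
    if 400 <= code < 500:
        return "client error"
    if 500 <= code < 600:
        return "server error"
    return "other"

# Hypothetical results of requesting four paths on your site.
crawl_log = {"/": 200, "/old-page/": 404, "/checkout/": 503, "/moved/": 301}

# Keep only the paths a crawler could not access.
errors = {
    path: classify_status(code)
    for path, code in crawl_log.items()
    if code >= 400
}
```

In this example `errors` contains `/old-page/` as a client error and `/checkout/` as a server error, which mirrors the two groups a crawl error report separates.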

Fortunately, there is a way to tell both searchers and search engines that your page has moved: the 301 (permanent) redirect.

Let's say you move a page from example.com/young-dogs/ to example.com/pup-dogs/. Search engines and users need a bridge to cross from the old URL to the new one. That bridge is a 301 redirect.

The 301 status code itself means that the page has permanently moved to a new location, so avoid redirecting URLs to irrelevant pages where the old URL's content doesn't actually live. If a page is ranking for a query and you 301 redirect it to a URL with different content, it may drop in the rankings because the content that made it relevant to that query is no longer there. 301s are powerful, so use them carefully!

You also have the option of 302 redirecting a page, but this should be reserved for temporary moves and for cases where passing link equity is not a concern. 302s are a bit like a road detour: you are temporarily routing traffic along a certain path, but it won't stay that way forever.
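The difference between the two redirect types can be sketched as a simple lookup table. Everything here is hypothetical: the paths, the `REDIRECTS` table, and the `respond` function stand in for whatever your web server or CMS actually uses to configure redirects.

```python
# Hypothetical redirect table: old path -> (status code, new path).
REDIRECTS = {
    "/old-page/": (301, "/new-page/"),    # permanent move
    "/summer-sale/": (302, "/sale/"),     # temporary detour
}

def respond(path):
    """Return (status, location) for a requested path."""
    if path in REDIRECTS:
        return REDIRECTS[path]
    return (200, path)  # no redirect configured: serve the page itself

permanent = respond("/old-page/")
temporary = respond("/summer-sale/")
unchanged = respond("/about/")
```

A crawler that receives the 301 can treat `/new-page/` as the lasting home of the content, while the 302 signals that `/summer-sale/` is expected to come back.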

Once you're sure you've optimized your site for crawlability, the next thing to do is to make sure it's indexable.

Indexing: How do search engines evaluate and store your pages?

Once you've made sure your site has been crawled, the next step is to make sure it can be indexed. That's right: just because your site can be discovered and crawled by a search engine doesn't necessarily mean it will be stored in its index. In the previous section on crawling, we discussed how search engines discover your web pages. The index is where your discovered pages are stored. After a crawler finds a page, the search engine renders it just as a browser would. In the process, the search engine analyzes that page's contents. All of that information is stored in its index. Read on to learn how indexing works and how you can make sure your site makes it into this all-important database.

Can I see how a Googlebot crawler sees my pages?

Yes, the cached version of your page reflects a snapshot of the last time Googlebot crawled it. Google crawls and caches web pages at different frequencies. Established, well-known sites that publish frequently, like https://www.nytimes.com, will be crawled more often than lesser-known sites such as Seoart's http://www.keyword.dev (if only it were real…).

To see what the cached version of a page looks like, click the drop-down arrow next to the URL in the SERP and choose "Cached".

You can also view the text-only version of your site to check whether your important content is being crawled and cached effectively.

Are pages ever removed from the index?

Yes, pages can be removed from the index! Here are some of the top reasons why a URL might be removed:

- The URL is returning a "not found" error (4xx) or server error (5xx). This could be accidental (the page was moved and a 301 redirect was not set up) or intentional (the page was deleted and returns a 404 in order to get it removed from the index).

- The URL had a noindex meta tag added. Site owners can add this tag to instruct the search engine to leave the page out of its index.

- The URL has been manually penalized for violating the search engine's Webmaster Guidelines and, as a result, was removed from the index.

- The URL has been blocked from crawling by a password that visitors must enter before they can access the page.

If you believe that a page on your website that was previously in Google's index is no longer showing up, you can use the URL Inspection tool to learn the status of the page, or use Fetch as Google, which has a "Request Indexing" feature for submitting individual URLs to the index. (Bonus: Google Search Console's "fetch" tool also has a "render" option that lets you see whether there are any issues with how Google is interpreting your page.)

Tell search engines how to index your site

Robots meta directives

Meta directives (or "meta tags") are instructions you can give to search engines about how you want your web page to be treated.

You can tell search engine crawlers things like "do not index this page in search results" or "don't pass any link equity to the links on this page". These instructions are executed via Robots Meta Tags in the `<head>` section of your HTML pages (most commonly used) or via the X-Robots-Tag in the HTTP header.

Robots meta tag

The robots meta tag can be used within the `<head>` section of your web page's HTML. It can exclude all search engines or only specific ones. Below are the most common meta directives, along with the situations in which you might apply them.

index/noindex tells search engines whether the page should be crawled and kept in the search engine's index for retrieval. If you opt to use "noindex", you are telling crawlers that you want the page excluded from search results. By default, search engines assume they can index all pages, so using the "index" value is unnecessary.

- When you might use it: You might mark pages as "noindex" if you want to trim thin pages from Google's index of your site while still keeping them accessible to visitors.

follow/nofollow tells search engines whether the links on the page should be followed. "Follow" results in bots following the links on your page and passing link equity through to those URLs. If you choose "nofollow", search engines will not follow the links on the page or pass any link equity through them. By default, all pages are assumed to have the "follow" attribute.

- When you might use it: nofollow is often used together with noindex when you want to prevent a page from being indexed and also prevent the crawler from following the links on it.

noarchive restricts search engines from saving a cached copy of the page. By default, search engines keep visible copies of all the pages they have indexed, which searchers can access through the cached link in the search results.

- When you might use it: If you run an e-commerce site and your prices change regularly, you might consider the noarchive tag to prevent searchers from seeing outdated pricing.

An example of meta robots noindex and nofollow tag:

...

This example prevents all search engines from indexing your page and following any links in it.
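To make the effect of these directives concrete, here is a sketch of how a crawler might read a robots meta tag using Python's standard `html.parser` module. The `PAGE` markup and the `RobotsMetaParser` class are assumptions written for this illustration; real search engines handle many more cases (engine-specific tags, conflicting directives, and so on).

```python
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            content = attributes.get("content") or ""
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )

# Hypothetical page carrying a noindex, nofollow robots meta tag.
PAGE = """<!DOCTYPE html>
<html><head>
<meta name="robots" content="noindex, nofollow">
</head><body>Hypothetical page</body></html>"""

parser = RobotsMetaParser()
parser.feed(PAGE)

# Absent directives fall back to the defaults: index and follow.
can_index = "noindex" not in parser.directives
can_follow = "nofollow" not in parser.directives
```

For this page both `can_index` and `can_follow` end up false. Remove the meta tag and both become true, which matches the default behavior described above.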

X-Robots-Tag

The x-robots tag is used within the HTTP header of your URL. It provides more flexibility and functionality than meta tags when you want to block search engines at scale, because you can use regular expressions, block non-HTML files, and apply noindex directives sitewide.

For example, you could easily exclude entire folders (like seoart.com/no-index/) or certain file types (like PDFs).
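In practice this header is usually set in the web server configuration. The sketch below expresses the same idea in Python so the matching logic is easy to see; the rule list, the `/no-index/` folder, and the `x_robots_headers` function are hypothetical and not tied to any particular server.

```python
import re

# Hypothetical rules: (pattern matched against the URL path, header value).
X_ROBOTS_RULES = [
    (re.compile(r"^/no-index/"), "noindex, nofollow"),               # a whole folder
    (re.compile(r"\.pdf$", re.IGNORECASE), "noindex, nofollow"),     # a file type
]

def x_robots_headers(path):
    """Return the extra response headers for a path (empty if no rule applies)."""
    for pattern, value in X_ROBOTS_RULES:
        if pattern.search(path):
            return {"X-Robots-Tag": value}
    return {}

folder_headers = x_robots_headers("/no-index/page.html")
pdf_headers = x_robots_headers("/guides/report.PDF")
normal_headers = x_robots_headers("/blog/")
```

Because the rules are regular expressions applied to the path, one line can cover every PDF on the site, something a per-page meta tag cannot do for non-HTML files.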

For more information about Meta Robot Tags, you can review Google's Robots Meta Tag Specifications.

Understanding the different ways you can affect crawling and indexing will help you avoid common mistakes that prevent your important pages from being found.

Ranking: How do search engines rank URLs?

How do search engines ensure that someone who types a query into the search box gets relevant results in return? That process is known as ranking: ordering search results from most relevant to least relevant to a particular query.

To determine relevance, search engines use algorithms, which are processes or formulas by which stored information is retrieved and ordered in meaningful ways.

These algorithms have gone through many changes over the years in order to improve the quality of search results. Google, for example, makes algorithm adjustments every day. Some of these updates are minor tweaks, while others are core/broad algorithm updates deployed to tackle a specific issue, like Penguin, which targeted link spam. See our Google Algorithm Change History for a list of confirmed and unconfirmed Google updates going back to the year 2000.

Why does the algorithm change so often? Is Google just trying to keep us on our toes? Although Google doesn't always reveal what it changed and why, we do know that its aim when making algorithm adjustments is to improve overall search quality. That's why, in response to questions about algorithm updates, Google tends to answer with something like "we're making quality updates all the time". This means that if your site suffers after an algorithm adjustment, you should compare it against Google's Quality Guidelines or Search Quality Rater Guidelines. Both are very telling about what search engines want.

What do search engines want?

Search engines always want the same thing: to provide useful answers to searchers' queries in the most helpful formats. So if that's true, why does SEO look different today than it did years ago?

Think of it like someone learning a new language. At first, their understanding of the language is very rudimentary. Over time, their understanding deepens and they learn semantics: the meaning behind the language and the relationship between words and phrases. Eventually, with enough practice, the student knows the language well enough to understand nuance and can answer even vague or incomplete questions.

When search engines were just beginning to learn our language, it was much easier to game the system with tricks and tactics that go against the quality guidelines. Take keyword stuffing, for example. If you wanted to rank for a particular keyword like "funny jokes", you might add the words "funny jokes" to your page over and over, in bold, like this:

"Welcome to **funny jokes**! We tell the funniest **funny jokes** in the world. **Funny jokes** are fun and crazy. Your **funny jokes** are waiting for you. Sit back and read the **funny jokes**, because **funny jokes** make you happy and make you laugh. Some of the most loved **funny jokes**."

Rather than laughing at funny jokes, people were bombarded with annoying, hard-to-read text, and the tactic made for a terrible user experience. It may have worked in the past, but it was never what search engines wanted.

The role of links in SEO

When we talk about links, we could mean two things. Backlinks or "inbound links" are links from other websites that point to your site, while internal links are links on your own site that point to your other pages.

Links have historically played a big role in SEO. Early on, search engines needed help figuring out which URLs were more trustworthy than others in order to rank search results. Counting the number of links pointing to any given site helped them do this.

Backlinks work very much like real-life word-of-mouth referrals. Let's take a hypothetical coffee shop, Jenny's Coffee, as an example:

- Referrals from others = a good sign of authority

Example: Many different people have all told you that Jenny's Coffee is the best in town.

- Referrals from yourself = biased, so not a good sign of authority

Example: Jenny claims that Jenny's Coffee is the best in town.

- Referrals from irrelevant or low-quality sources = not a good sign of authority, and could even get you flagged as spam

Example: Jenny paid people who have never visited her coffee shop to tell others how good it is.

- No referrals = unclear authority

Example: Jenny's Coffee might be good, but you couldn't find anyone with an opinion about it.

This is why PageRank was created. PageRank (part of Google's core algorithm) is a link analysis algorithm named after one of Google's founders, Larry Page. PageRank estimates the importance of a web page by measuring the quality and quantity of the links pointing to it. The assumption is that the more relevant, important, and trustworthy a page is, the more links it will have earned.

The more backlinks you get from high authority (trusted) websites, the better your chances of ranking in search results.
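The idea behind PageRank can be illustrated with the simplified textbook version of the algorithm. The sketch below is not Google's production system: the damping factor of 0.85, the iteration count, the `pagerank` function, and the tiny four-page link graph are all assumptions chosen for illustration.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by repeated redistribution of rank along links."""
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page keeps a small baseline share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outlinks in links.items():
            if outlinks:
                # A page splits its rank evenly among the pages it links to.
                share = damping * rank[page] / len(outlinks)
                for target in outlinks:
                    new_rank[target] += share
            else:
                # A page with no outlinks spreads its rank over all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank

# Hypothetical link graph: page -> pages it links to.
LINKS = {
    "jennys-coffee": ["city-guide"],
    "city-guide": ["jennys-coffee", "food-blog"],
    "food-blog": ["jennys-coffee"],
    "new-cafe": ["jennys-coffee"],
}

ranks = pagerank(LINKS)
best = max(ranks, key=ranks.get)
worst = min(ranks, key=ranks.get)
```

In this graph "jennys-coffee" earns links from three other pages and ends up with the highest score, while "new-cafe", which nobody links to, ends up with the lowest. That is the "no referrals = unclear authority" case expressed in numbers.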

The role content plays in SEO

There would be no point to links if they didn't direct searchers to something. That something is content! Content is more than just words; it's anything meant to be consumed by searchers: video content, image content, and of course text. If search engines are answer machines, content is the means by which the engines deliver those answers.

Any time someone performs a search, there are thousands of possible results. So how do search engines decide which pages the searcher will find valuable? A big part of determining where your page will rank for a given query is how well the content on your page matches the query's intent. In other words, does this page match the words that were searched and help the searcher complete the task they were trying to accomplish?

Because of this focus on user satisfaction and task completion, there is no fixed benchmark for how long your content should be, how many times it should contain a keyword, or what you put in your title tags. All of these can play a role in how well a page performs in search, but the focus should be on the users who will be reading the content.

Today, among hundreds or even thousands of ranking signals, the top three have stayed fairly consistent: links to your website (which serve as third-party credibility signals), on-page content (quality content that fulfills a searcher's intent), and RankBrain.

What is RankBrain?

RankBrain is the machine learning component of Google's core algorithm. Machine learning is a computer program that continues to improve its predictions over time through new observations and training data. In other words, it's always learning, and because it's always learning, search results should be constantly improving.

For example, if RankBrain notices a lower-ranking URL giving users a better result than the higher-ranking URLs, you can bet that RankBrain will adjust those results, moving the more relevant result up and demoting the less relevant pages as a byproduct.

As with most things about the search engine, we don't know exactly what RankBrain comprises, but apparently neither do the folks at Google.

What does this mean for SEO?

Because Google will continue to use RankBrain to promote the most relevant, helpful content, we need to focus on fulfilling searcher intent more than ever. Provide the best possible information and experience for searchers who might land on your page, and you've taken a big first step toward performing well in a RankBrain world.

Engagement metrics: correlation, causation, or both?

In Google rankings, engagement metrics are most likely partly correlational and partly causal.

When we say engagement metrics, we mean data that represents how searchers interact with your site from search results. This includes things like:

- Clicks (visits from search)

- Time on page (the amount of time a visitor spent on a page before leaving it)

- Bounce rate (the percentage of all website sessions in which users viewed only one page)

- Pogo-sticking (clicking on an organic result and then quickly returning to the SERP to choose another result)
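The first three metrics in the list above are simple aggregates over visit data. The sketch below computes them from a handful of made-up sessions; the `sessions` records, their field names, and the helper functions are hypothetical, and analytics tools may define these metrics with more nuance.

```python
# Hypothetical sessions that started from a search result.
sessions = [
    {"pages_viewed": 1, "seconds_on_page": 5},
    {"pages_viewed": 3, "seconds_on_page": 120},
    {"pages_viewed": 1, "seconds_on_page": 40},
    {"pages_viewed": 2, "seconds_on_page": 75},
]

def bounce_rate(sessions):
    """Share of sessions in which the user viewed only one page."""
    bounces = sum(1 for s in sessions if s["pages_viewed"] == 1)
    return bounces / len(sessions)

def average_time_on_page(sessions):
    """Mean number of seconds spent on the landing page."""
    return sum(s["seconds_on_page"] for s in sessions) / len(sessions)

clicks = len(sessions)                  # each session began with one click
rate = bounce_rate(sessions)            # 2 of 4 sessions viewed one page
avg_time = average_time_on_page(sessions)
```

With this sample data there are 4 clicks, a bounce rate of 0.5, and an average time on page of 60 seconds.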

Many tests, including Seoart's own ranking factors survey, have indicated that engagement metrics correlate with higher rankings, but causation has been hotly debated. Are good engagement metrics merely indicative of highly ranked sites? Or are sites ranked highly because they have good engagement metrics?

Here's what Google said

Although Google has never used the term "direct ranking signal", it has been clear that it uses click data to modify the SERP for particular queries.

As Google's former Head of Search Quality Udi Manber said:

"The ranking itself is affected by the click data. If we discover that, for a particular query, 80% of people click on #2 and only 10% click on #1, after a while we figure out that #2 is probably the one people want, so we'll switch it."

Another comment from former Google engineer Edmond Lau confirms this:

"It's pretty clear that any reasonable search engine would use click data on its own results. The actual mechanics of how click data is used are often proprietary. However, Google makes it clear through its patents that it uses click data in systems such as rank-adjusted content items."

Because Google needs to maintain and improve search quality, it seems inevitable that engagement metrics are more than just correlation. However, Google appears to stop short of calling engagement metrics a "ranking signal", because those metrics are used to improve search quality and the rank of individual URLs is just a byproduct of that.

Tests confirm

Various tests have confirmed that Google adjusts SERP order in response to searcher engagement:

- Rand Fishkin's 2014 test resulted in a #7 result moving up to the #1 spot after around 200 people clicked on the URL from the SERP. Interestingly, the ranking improvement seemed to be isolated to the location of the people who visited the link. The rank position spiked in the US, where many participants were located, whereas it remained lower on the page in Google Canada, Google Australia, etc.

- Larry Kim's comparison of top pages and their average time on page before and after RankBrain seemed to indicate that the machine learning component of Google's algorithm demotes pages that people don't spend much time on.

- Darren Shaw's testing has shown the impact of user behavior on local search and map pack results as well.

Since user engagement metrics are clearly used to adjust the SERPs for quality, with rank position changes as a byproduct, it's safe to say that SEOs should optimize for engagement. Engagement doesn't change the objective quality of your web page; it changes your value to searchers relative to the other results for that query. That's why, with no changes to your page or its backlinks, your page could drop in the rankings if searchers' behavior indicates they like other pages better.

When it comes to ranking web pages, engagement metrics act like a fact-checker. Objective factors such as links and content rank the page first, and then engagement metrics help Google adjust if it didn't get the ranking right.

Evolution of search results

Back when search engines lacked much of the sophistication they have today, the term "10 blue links" was coined to describe the flat structure of the SERP. Any time a search was performed, Google would return a page with 10 organic results, each in the same format.

In this search landscape, holding the #1 spot was the holy grail of SEO. But then something happened. Google began adding results in new formats to its search result pages, called SERP features. Some of these SERP features include:

- Paid advertisements

- Featured snippets

- "People Also Ask" boxes

- Local (map) pack

- Knowledge panel

- Sitelinks

Google keeps adding new ones. Even more results below, where a single result from the Knowledge Graph is displayed on the SERPs; “image” "end-zero", where no other option is given but SERPs” They experimented with a phenomenon called.

The addition of these features caused some initial panic, for two main reasons. First, many of these features pushed organic results further down the SERP. Second, searchers clicked less on organic results because the SERP itself answered more queries.

So why did Google do this? It all goes back to the search experience. User behavior shows that some queries are better served by different content formats, so different types of SERP features match different types of queries.

We'll talk more about intent in Chapter 3. For now, it's important to know that answers can be delivered to searchers in many different formats, and that the way you structure your content affects how it appears in search.

Localized search

A search engine like Google has its own index of local business listings, from which it generates local search results.

If you are doing local SEO for a business that has a physical location customers can visit (e.g. a dentist) or for a business that travels to its customers (e.g. a plumber), make sure you claim and verify a free Google My Business listing.

When it comes to localized search results, Google uses three main factors to determine ranking:

- Relevance

- Distance

- Prominence

Relevance

Relevance is how well a local business matches what the searcher is looking for. To ensure the business is doing everything it can to be relevant to searchers, make sure the business's information is complete and accurate.

Distance

Google uses your geographic location to better serve you. Local search results are sensitive to the proximity of the user or the location specified in the query (if the searcher specified it).
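Google does not publish how it measures proximity. As a rough illustration only, the great-circle (haversine) distance between a searcher and a set of businesses can be computed and used to order candidates by distance. The coordinates, business names, and function names below are hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, an approximation


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def sort_by_proximity(searcher, businesses):
    """Return businesses ordered from nearest to farthest from the searcher."""
    return sorted(
        businesses,
        key=lambda b: haversine_km(searcher[0], searcher[1], b["lat"], b["lon"]),
    )


# Hypothetical searcher location and three made-up dentists.
searcher = (41.0082, 28.9784)
businesses = [
    {"name": "Dentist A", "lat": 41.0422, "lon": 29.0083},
    {"name": "Dentist B", "lat": 41.0100, "lon": 28.9800},
    {"name": "Dentist C", "lat": 40.9900, "lon": 29.0600},
]

ordered = sort_by_proximity(searcher, businesses)
for b in ordered:
    distance = haversine_km(searcher[0], searcher[1], b["lat"], b["lon"])
    print(f'{b["name"]}: {distance:.1f} km')
```

Distance is only one of the three factors, so a nearer business is not guaranteed to outrank one that is farther away but more relevant or better known.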

Prominence

With prominence, meaning how well known a business is, Google aims to reward businesses that are well known in the real world. In addition to the offline reputation of the business, Google also takes some online factors into account when determining local ranking, such as:

Reviews

The number of Google reviews a local business receives, and the sentiment of those reviews, have a significant impact on how the business ranks in local results.

Citations

A “business citation” or “business listing” is a web-based reference to the “NAP” (name, address, phone number) of a local business on a localized platform (Yelp, Acxiom, YP, Infogroup, Localeze, etc.).

Local rankings are affected by the number and consistency of local business citations. Google builds its local business index with data it continuously pulls from a wide range of sources. When Google finds multiple consistent references to a business's name, location, and phone number, its “trust” in the validity of that data grows. This in turn lets Google show the business with a higher degree of confidence. Google also uses information from other sources on the web, such as links and articles.
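How Google actually matches citations is not public, but consistency is something you can audit yourself. The sketch below is one possible approach, not an established tool: it normalizes each NAP record and reports what share of citations agree with the most common version. The business, the platform data, and the function names are all hypothetical.

```python
import re
from collections import Counter


def normalize_nap(name, address, phone):
    """Reduce a NAP record to a comparable form.

    Text is lowercased and stripped of punctuation; the phone number keeps digits only.
    """
    def clean(text):
        return " ".join(re.sub(r"[^a-z0-9 ]", "", text.lower()).split())

    return (clean(name), clean(address), re.sub(r"\D", "", phone))


def nap_consistency(citations):
    """Return the most common normalized NAP and the share of citations matching it."""
    normalized = [normalize_nap(c["name"], c["address"], c["phone"]) for c in citations]
    most_common, count = Counter(normalized).most_common(1)[0]
    return most_common, count / len(normalized)


# Hypothetical citations for one made-up business on three platforms.
citations = [
    {"source": "Yelp", "name": "Acme Dental", "address": "12 Main St.", "phone": "(555) 010-0199"},
    {"source": "YP", "name": "ACME Dental", "address": "12 Main St", "phone": "555-010-0199"},
    {"source": "Localeze", "name": "Acme Dental Clinic", "address": "12 Main Street", "phone": "555 010 0199"},
]

canonical, share = nap_consistency(citations)
print("Most common NAP:", canonical)
print(f"Share of citations that match it: {share:.0%}")
```

The normalization is deliberately simple: it treats "St" and "Street" as different, so the third listing is flagged as inconsistent. A real audit would likely need abbreviation handling on top of this.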

Organic ranking

SEO best practices also apply to local SEO, since Google also considers a website's position in organic search results when determining local ranking.

In the next chapter, you'll learn on-page best practices that help Google and users better understand your content.

[Bonus!] Local engagement

Although Google does not list it as a local ranking factor, the role of engagement will only continue to grow over time. Google continues to enrich local results with real-world data such as popular times to visit and average visit length, and it even lets searchers ask the business questions.

Undoubtedly, local results are now influenced by real-world data more than ever before. This interactivity reflects how searchers interact with and respond to local businesses, rather than purely static information such as links and citations.

Since Google wants to present the best local businesses that are most relevant to searchers, it makes a lot of sense for Google to use real-time engagement metrics to determine quality and relevance.

“SEO requires attention and patience. To be successful, follow the changing technological developments closely.”

– SEOART TEAM

Start here. By following the steps in our guide, you can achieve successful SEO:

- Getting Started Guide : Crawl accessibility so engines can read your website

- SEO 101 : Engaging content that answers searchers' questions

- SEO Glossary : Snippet/schema markup to stand out in SERPs

- Keyword Research : Keyword research is a vital part of SEO that can help you to drive more traffic and conversions to your website. By understanding your target audience's search behavior and optimizing your content with the right keywords and phrases, you can improve your website's visibility and ranking in search results. So, start your keyword research today and see the difference it can make to your SEO efforts.

- Browsing, Indexing, Sorting : Keyword optimized to attract searchers and search engines

- On-Page SEO : Share-worthy content winning links, quotes, and amplifications

- Technical SEO : Title, URL and description to achieve high CTR in rankings

- Link Building and Authority : Snippet/schema markup to stand out in SERPs

- Measurement and Monitoring : Measurement and Monitoring
